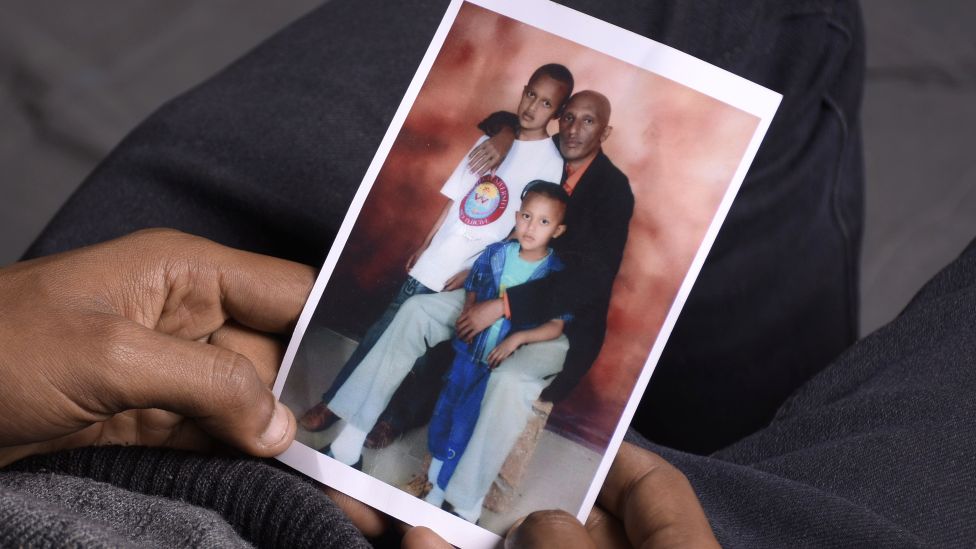

When Ethiopian university student Moti Dereje logged into his Facebook account in late November 2018, he was expecting to see the usual updates from his friends and family.

Instead, the 19-year-old – who was studying in the capital, Addis Ababa – saw a shocking post.

“As I refreshed my news feed, I saw my father’s dead body lying there,” he told the BBC’s The Comb podcast.

Not only was Mr Moti devastated by seeing such a horrific image, but this was how he found out that his father had been killed.

“I kind of froze at that moment. It was really shocking for me,” he says.

With Ethiopia experiencing political unrest in various regions over the last few years, social media has been flooded with graphic images and videos, disinformation and posts inciting violence.

Activists have been calling for more to be done to moderate the platforms and take down such content before it causes harm.

Rather than seeing the picture taken down, Mr Moti started to see more posts showing the same image.

His father, a former MP who was working as a university administrator, was the target of a political killing in western Oromia.

The region was going through a period of turmoil at the time with frequent assassinations.

“It’s like they were celebrating killing him. It was actually painful,” Mr Moti explains.

The BBC has seen 15 of these posts, which Mr Moti says he reported to Facebook in the hope that they would be taken down.

Facebook’s Community Standards state it will remove “videos and photos that show the violent death of someone when a family member requests its removal”.

But for over four years, and after Mr Moti says he reported the posts multiple times, they stayed online.

Only a graphic content warning was applied to some of the pictures.

“Sometimes I get depressed and I can’t stop my hand searching his name and checking out the posts. I’m kind of traumatised I think,” Mr Moti says.

When the BBC approached Facebook’s parent company Meta for comment, it said that although the pictures alone did not violate its policy on violent and graphic content, it would take down the posts because of a family member’s request.

All the posts showing the picture of Mr Moti’s dead father have now been removed.

There is currently no mechanism in Facebook’s reporting system for family members to make these requests, but Meta told the BBC that it was testing a new form to allow for this.

‘Ethiopian content ignored’

Concern has been mounting about the volume of graphic content and hate speech being shared on Ethiopian social media.

This was a problem prior to 2020, but when the war in the northern Tigray region broke out in November of that year, the amount of violence making its way into people’s news feeds increased dramatically.

In 2021 Facebook’s Oversight Board recommended that the company commission an independent investigation for Ethiopia specifically, to review how the platform has been used to spread hate speech and add to the violence.

This review has not been undertaken. The BBC asked Meta about this, and a spokesperson highlighted a previous response from the company, saying it would assess the feasibility of such a review – and that it had previously conducted multiple forms of human rights due diligence related to Ethiopia.

Last year it was announced that Meta was being sued by two Ethiopians who allege Facebook’s algorithm helped fuel the viral spread of hate and violence.

Meta responded saying it invested heavily in moderation and technology to remove hate.

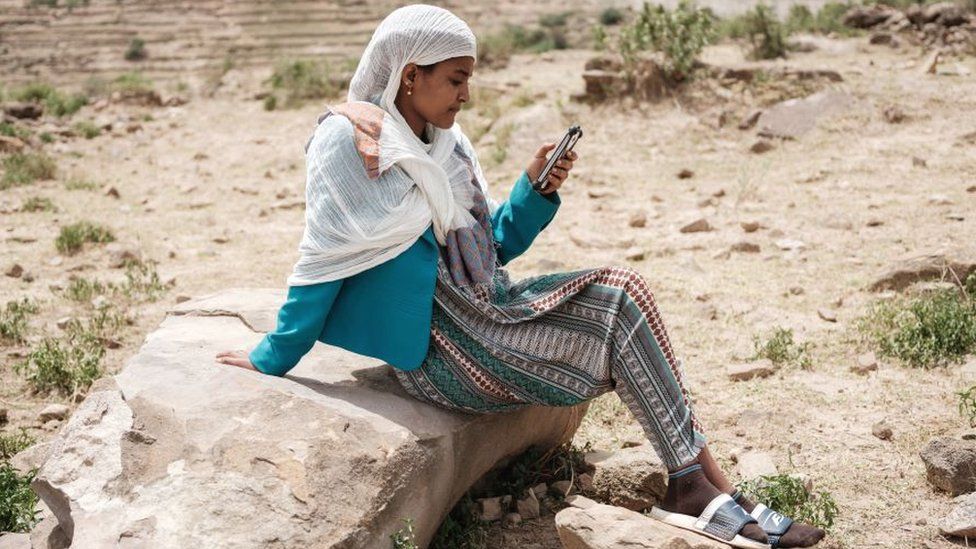

But Rehobot Ayalew, a fact-checking consultant based in Addis Ababa, questions how much is being done by social media companies to address the problem.

“I don’t think the platforms are giving that much attention to Ethiopia,” she told The Comb.

“I know Facebook says that it has moderators who focus on Ethiopia, who are Amharic speakers and Tigrinya speakers, but we don’t know how many. And they are not even working from Ethiopia, they are working in Kenya.”

The impact of this, according to Ms Rehobot, is that violent content often stays online far too long.

“It usually takes a long time for a toxic post to be taken down,” she says.

‘Anger whipped up’

Ms Rehobot references a video which was shared widely in March 2022, in which a Tigrayan man was burned alive.

“First of all, the artificial intelligence by itself should have removed it,” she explains.

“Then even after it gets reported, it should have been removed quickly, but it stayed for some hours.”

A Meta spokesperson told the BBC: “We have strict rules which outline what is and isn’t allowed on Facebook and Instagram. Hate speech and incitement to violence are against these rules and we invest heavily in teams and technology to help us find and remove this content.

“We employ staff with local knowledge and expertise, and continue to develop our capabilities to catch violating content in the most widely spoken languages in the country, including Amharic, Oromo, Somali and Tigrinya.”

Some good can come when public attention is drawn to heinous human rights abuses, but content like this can also be used to whip up anger and place blame on specific ethnic groups or individuals.

All the while, the traumatic effects of viewing such content are felt by thousands, if not millions, of Ethiopians.

In November last year a peace deal was signed with the aim of bringing an end to the war in Tigray and starting to address the dire humanitarian situation it has caused.

Estimates of the number of people killed are in the hundreds of thousands, with the majority of those deaths caused by hunger and lack of medical supplies as a result of the fighting.

Meanwhile unrest still rocks the Oromia region, which has seen a growing rebellion.

Activists fear that reconciliation in these cases will be hampered by the violence that haunts social media.

“People tend to believe [the posts] and act upon them, so widespread violent and destructive images can surely slow the process of peace and reconciliation,” says Ms Rehobot.

Now aged 23, Mr Moti is trying to move on with his life, pursuing a career as a videographer and photographer, and is even making a documentary about his own experience.

“I think it is the time I tell my own story trying to fight back and maybe, if I succeed, maybe I can bring a change,” he says.

But he says the prevalence of graphic content will trouble him until it is brought under control.

“Whenever I see a dead body posted online or on Facebook due to the Ethiopian war, I really feel saddened. Somebody’s going to be feeling what I’ve been through.

“It really kills me.”